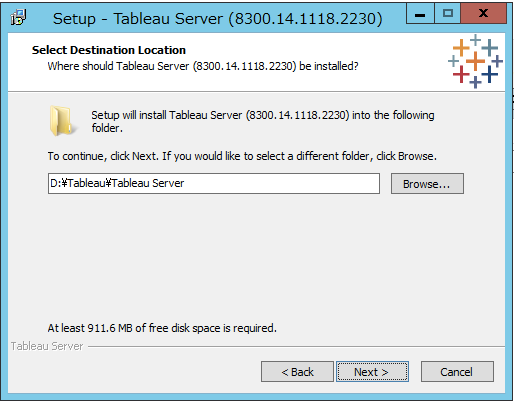

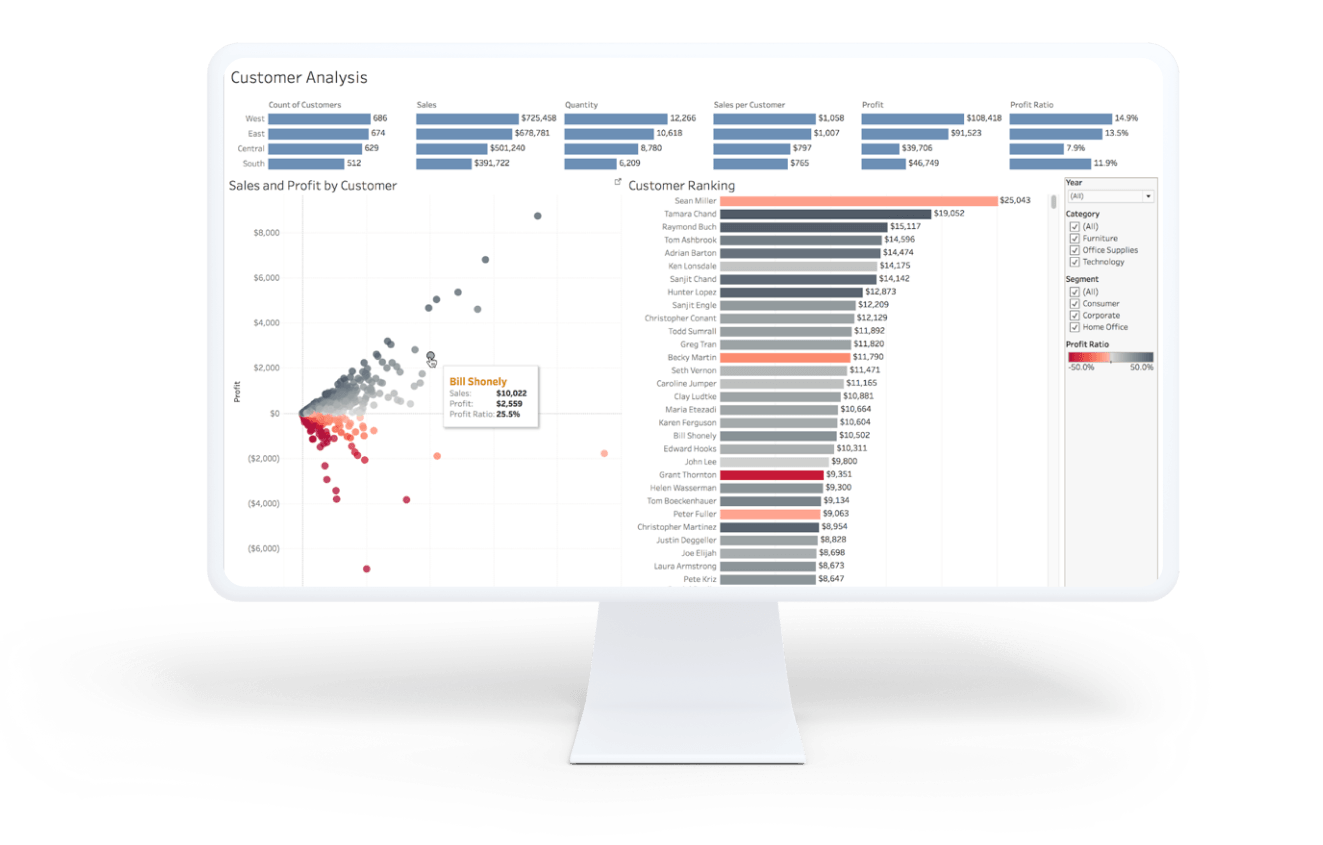

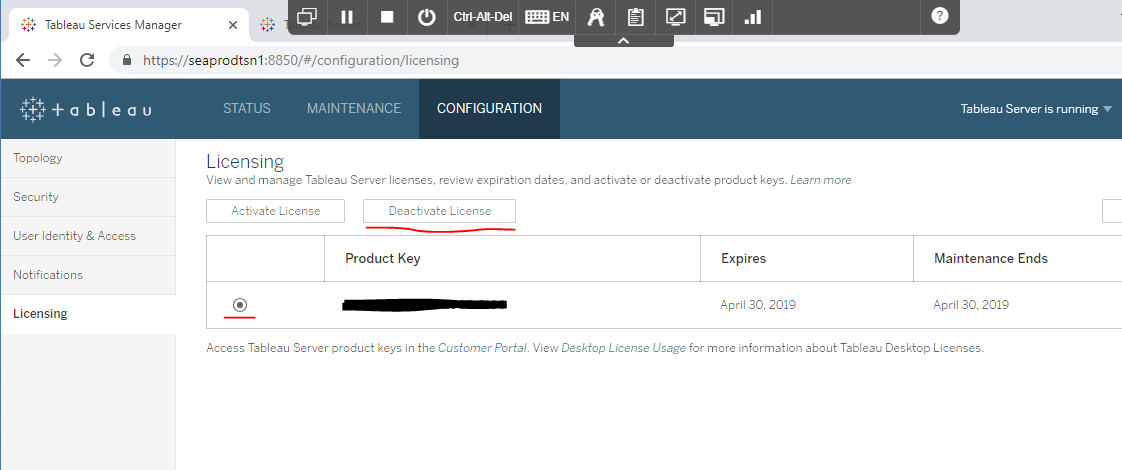

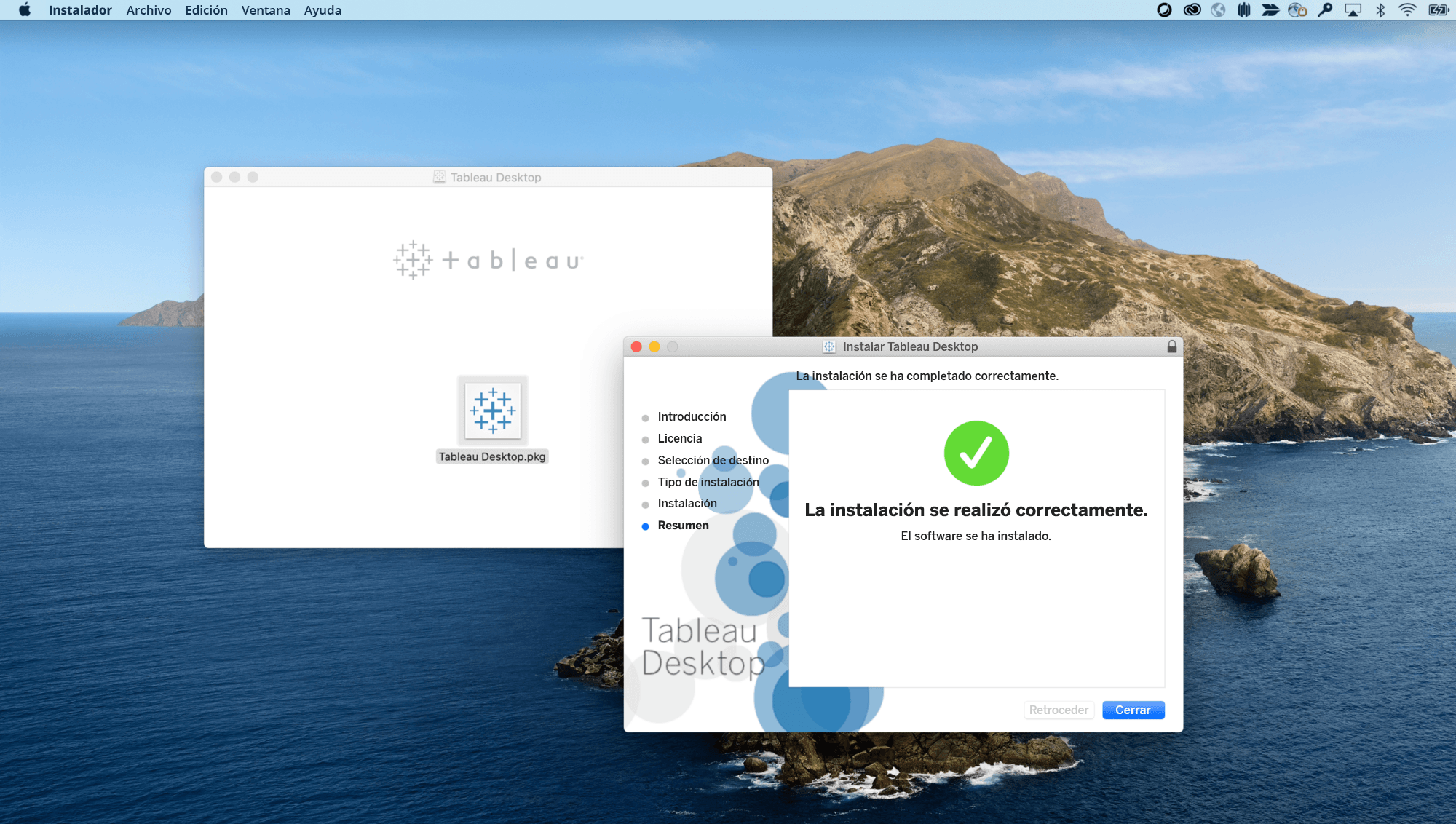

Developed Tableau workbooks involving advanced analysis techniques such as Sets & Filters, Data Grouping/Visual Grouping, Data Blending, and Computed Sort features, and tuned BI reports for performance. Tableau Server administration: creating users, schedules and subscriptions, and server monitoring. Experience implementing POCs with Tableau visualizations for the business and user community. Proficient in publishing and distributing Tableau content at enterprise level. Designed dashboards with best practices for optimum performance. Created Tableau dashboards combining data from multiple data sources. Defined Tableau dashboard standards and best practices. Configured sparkler for Tableau integration via SAML authentication. Experience with Tableau Server/Desktop install validation for the upgrade to Tableau 9.0. Tableau Admin and Developer roles (5 years).

Using Hive/Pig, we extracted data from HDFS file systems. Migrated Teradata DW/DB historical data into Hadoop (HDFS) systems and accessed it through Impala/Hive/Sqoop. Performed Teradata SQL troubleshooting and fine-tuning of scripts provided by analysts and developers. Good understanding of data warehousing concepts such as Third Normal Form (3NF) as well as dimensions, facts, and star schema. Good experience with Teradata Enterprise Data Warehouse (EDW) and data marts. Expert in tuning complex Teradata/Oracle/SQL Server SQL queries, including joins, correlated subqueries, and scalar subqueries. Expert in designing, developing, and maintaining relational and multi-sourced data sources for analytical reporting and Tableau visualizations, with an understanding of Tableau administration.

Involved in project kick-off, release management, and status meetings to discuss testing status, configuration management, and deadlines. Strong analytical and problem-solving skills. Very comfortable interacting with customers and technical teams. Able to work independently and as part of a team. Strong interpersonal and communication skills. Involved in all phases of implementation, from business blueprinting, solution design, data modeling, ETL, and report design and development through production deployment/support and end-user training. Able to think critically, multitask, and work under tight deadlines and rapidly changing priorities.

Reporting/Visualization Senior Consultant with 5+ years of experience with Tableau 8.1/8.3/9.0/10.1 and Teradata DW/DB 15.10, including four full life cycle BI implementations, with a blend of technology and management skills; mentored, managed, and led IT teams.

`table.appendRows(tableData.slice(row_index, size + row_index))`

Each append operation needs to be under the 128MB data limit. If you are working with a large data set, you might want to choose a larger size to improve performance.

In this example, 1000 rows are added at a time. It is a best practice to also call the reportProgress function as you add data so that Tableau can report the progress to end-users during the extract creation process.
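The chunked append with progress reporting can be sketched as below. This is a minimal illustration, not the connector's actual code: `appendInChunks`, `fakeTable`, and `fakeTableau` are hypothetical names introduced here so the loop can run outside Tableau. In a real connector, `table` is the object passed to `getData` and `tableau` is the global provided by the Web Data Connector library.

```javascript
// Hypothetical helper: append rows to a WDC table in fixed-size chunks.
// `tableauApi` stands in for the global `tableau` object (an assumption,
// made so the function can be exercised outside Tableau).
function appendInChunks(table, tableData, size, tableauApi) {
    var row_index = 0;
    while (row_index < tableData.length) {
        // slice() clamps at the end of the array, so the last chunk may be shorter
        table.appendRows(tableData.slice(row_index, size + row_index));
        row_index += size;
        // Let Tableau show the user how far the extract has progressed
        tableauApi.reportProgress(
            "Getting row: " + Math.min(row_index, tableData.length)
        );
    }
}

// Stand-ins for illustration only; they record what the loop does.
var fakeTable = {
    chunks: [],
    appendRows: function (rows) { this.chunks.push(rows.length); }
};
var fakeTableau = {
    messages: [],
    reportProgress: function (msg) { this.messages.push(msg); }
};

// Build 2500 sample rows and append them 1000 at a time.
var sampleRows = [];
for (var i = 0; i < 2500; i++) {
    sampleRows.push({ id: i });
}
appendInChunks(fakeTable, sampleRows, 1000, fakeTableau);
```

With 2500 rows and a size of 1000, the loop makes three `appendRows` calls, and the final call carries the remaining 500 rows.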

Each row in the table is assigned an index value, represented by the row_index variable. The code above uses a size variable along with row_index to add rows of data in size increments, which is important if you are working with very large data sets. To avoid overwhelming the data pipeline, which currently has a 128MB limit per function call, you can wrap the appendRows call in a while loop. appendRows is called in the getData function during the Gather Data phase to append rows of data to the extract. It takes either an array of arrays or an array of objects containing the actual rows of data for the extract; the format of these matches version 1 of the API.
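The two row formats that appendRows accepts can be illustrated roughly as follows. The column ids (`id`, `name`) and the sample values are made up for this sketch, and the array-of-arrays form is assumed to list values in the same order as the columns in the table schema. `stubTable` is a hypothetical stand-in for the table object Tableau passes to getData.

```javascript
// Hypothetical column ids; in a real connector these come from the schema.
var columnIds = ["id", "name"];

// Format 1: array of objects, keyed by column id.
var objectRows = [
    { id: 1, name: "alpha" },
    { id: 2, name: "beta" }
];

// Format 2: array of arrays, values assumed to follow schema column order.
var arrayRows = objectRows.map(function (row) {
    return columnIds.map(function (col) { return row[col]; });
});

// Sketch of a getData function that appends rows and signals completion.
function getData(table, doneCallback) {
    table.appendRows(objectRows);
    doneCallback();
}

// Stand-in table for running the sketch outside Tableau.
var stubTable = {
    received: [],
    appendRows: function (rows) { this.received = this.received.concat(rows); }
};
var finished = false;
getData(stubTable, function () { finished = true; });
```

Objects are usually easier to read and less sensitive to column reordering, while arrays are more compact for wide tables.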
